Technical hire failures in the first year: the 4-stage loop that separates signal from resume optimization

If 90% of companies missed their hiring goals last year, the problem isn't talent scarcity. It's evaluation quality.

I've been watching startups spend $120K-$200K per year on technical hires who flame out in the first twelve months. The pattern is consistent: optimized resume passes screening, candidate demos well in one technical round, offer goes out. Six months later, the engineer can't debug production issues, misses deadlines, or doesn't integrate with the team. The founder blames the candidate. I blame the interview process.

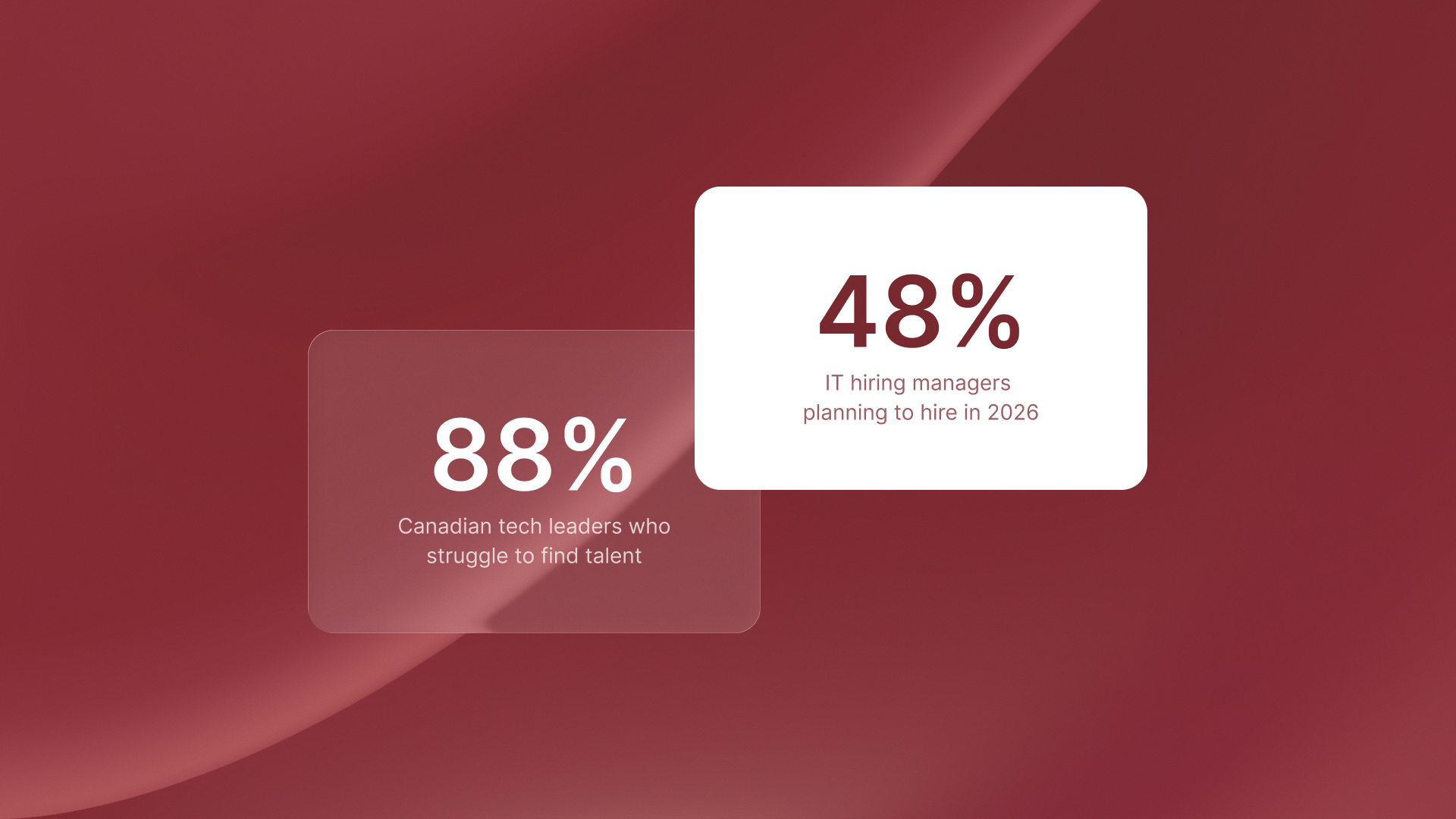

According to GoodTime, 90% of companies missed their hiring goals in 2025, with 1 in 3 missing by a wide margin. The core issue: skills misalignment between what resumes promise and what candidates actually deliver. Add AI-generated resumes as the #1 threat for 2026, and traditional resume screening collapses entirely. Single-round technical interviews can't catch optimization theater. Understanding technical hire failures in the first year requires looking past the resume to the evaluation process itself.

The execution gaps behind first-year technical hire failures

The hiring crisis isn't about candidate supply. It's about interviewer readiness gaps and evaluation shortcuts, both of which make the AI-generated resume problem worse.

Untrained hiring managers

Most engineering managers conduct interviews without structured evaluation criteria. They rely on gut feel: does the candidate seem smart, do they use the right buzzwords, would I want to work with them?

That's not assessment. That's coin-flipping with a $150K budget.

I've seen companies hire senior backend engineers who couldn't debug a production issue three months into the role. The interviewer asked about microservices architecture. The candidate described their experience in detail. But nobody verified they could actually solve the problems that architecture was supposed to fix. A structured interview process would have caught this. A single conversation didn't.

Inconsistent evaluation criteria

One interviewer tests algorithm design. Another asks behavioral questions. A third focuses on culture fit. No coordination, no shared rubric, no way to combine the feedback.

When decision time comes, everyone votes based on different criteria. The hire becomes a popularity contest, not a capability screen. GrowTal's hiring framework helps non-technical managers evaluate specialists. It shows that portfolio reviews and scenario-based interviews cut placement risk by 60% compared to resume-based selection. The same principle applies to technical hiring: structured evaluation beats gut instinct.

Rushed timelines

Startups fear losing candidates to competing offers, so they compress timelines. Two interviews, 48-hour decision cycle, offer extended.

The speed doesn't eliminate risk. It concentrates risk into fewer evaluation moments. You get one or two data points to make a $150K-$200K decision. Each round becomes critical while interviewers have less time to prepare. Traditional hiring fails 70% of the time at growth-stage companies, according to GrowTal's fractional marketing analysis. The pattern extends beyond marketing roles to every function where rushed evaluation replaces systematic vetting.

Why AI-generated resumes create a new filter challenge

Resumes used to signal capability. Now they signal optimization skill.

AI tools can create keyword-rich resumes that pass ATS filters and impress human screeners, even when the candidate has no hands-on experience. The disconnect shows up in technical rounds. A candidate describes a system they "architected" in detail, using all the right terminology. But when you ask them to debug a specific edge case or explain a tradeoff decision, they stumble.

The resume was real. The experience wasn't.

Traditional one-round technical screens can't catch this. You need sequential evaluation across multiple problem dimensions to separate memorized patterns from actual problem-solving ability. AI tools for small business show how automation creates leverage across operations. But hiring remains a judgment problem. Automation can screen resumes, but only structured human evaluation can verify capability.

The 4-stage interview loop framework that reduces mis-hire risk

A 4-stage interview loop forces candidates to demonstrate capability across cultural fit, technical execution, systems thinking, and decision-making under constraints. Each stage filters a different failure mode.

Here's how multiple interview stages reduce mis-hire risk: four distinct evaluation moments, each targeting a specific capability gap.

Stage 1: Cultural fit and communication (30 minutes)

A team member conducts this stage, not the hiring manager. Focus: how does the candidate explain technical concepts to non-technical stakeholders? Do they ask clarifying questions before jumping to solutions? Can they adapt communication style based on audience?

This stage catches people who can code but can't collaborate. If your startup needs engineers who interface with customers or cross-functional teams, this screen is non-negotiable. Fragmented tool workflows waste 15+ hours monthly through context switching. The same principle applies to teams: engineers who can't communicate create coordination overhead that compounds across every project.

Stage 2: Technical assessment (60-90 minutes)

Real problems from your stack. Not whiteboard algorithms. Actual bugs from your codebase, architectural decisions you faced last quarter, or production incidents you debugged.

This stage separates hands-on capability from resume keywords. Autonomous testing platforms like QA flow cut manual QA work and remove the 3-6 month hiring delay, which can hide $600K in yearly costs. But human judgment still matters for evaluating how engineers approach unfamiliar problems under realistic constraints.

Your technical assessment should mirror actual work conditions: incomplete requirements, legacy code, time pressure, production incidents. If the role requires debugging distributed systems at 2am, test that capability directly.

Stage 3: Systems thinking (45 minutes)

Present a scaling problem: traffic spike, database bottleneck, API rate limits. Ask the candidate to design a solution. Evaluate their ability to balance tradeoffs: cost vs performance, complexity vs maintainability, build vs buy.

This stage reveals whether someone thinks in systems or just writes code. Senior hires especially need to pass this screen. I've watched companies hire "senior" engineers who could implement features but couldn't architect solutions. Three months later, technical debt compounds and the team slows down. AI business case failures show that 42% of initiatives fail because teams optimize for demos rather than production systems. The same pattern appears in hiring: candidates who demo well but can't design for production constraints.

Stage 4: Decision-making under constraints (30 minutes)

Scenario: limited budget, tight deadline, conflicting stakeholder priorities. How does the candidate prioritize? What do they cut, what do they protect, and how do they communicate the plan?

This stage tests judgment. Technical skill gets you to solutions. Judgment decides which solution to build. AI time tracking that is only 80% accurate forces manual corrections that consume more time than the automation saves. The lesson: precision matters more than speed. The same principle applies to hiring decisions. A slower, structured process that reduces mis-hire risk beats a fast process that concentrates risk.

How compliance complexity mirrors hiring complexity

Every startup faces the same structural challenge: one-size-fits-all processes break at scale.

Compliance traps when hiring Canadian tech talent show how provincial rule variations create costly mistakes. Statutory holidays vary by province. Termination notice periods don't match U.S. at-will assumptions. Mandatory benefits differ across locations. The pattern: generic templates fail when local variations matter.

Hiring follows the same logic. Generic interview processes fail when role-specific capabilities matter. A one-size-fits-all technical screen can't evaluate backend engineers, frontend developers, and DevOps engineers with equal precision. You need role-specific evaluation criteria mapped to actual job requirements.

What to do next

Audit your current interview process:

- How many evaluation stages do you have?

- What specific criteria does each stage test?

- Are interviewers trained on those criteria?

- Do you aggregate feedback systematically or rely on gut votes?

Define evaluation criteria per stage:

- Write down the 3-5 specific signals each interview round should capture

- Create scoring rubrics (1-5 scale) for each signal

- Train interviewers to use the rubric consistently
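
To make "aggregate feedback systematically" concrete, here is a minimal sketch of how 1-5 rubric scores could be combined per stage. The `Score` record, the `aggregate` function, and the 3.0 cutoff are illustrative assumptions, not part of any specific tool or of the framework itself; adjust them to your own rubric.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Score:
    """One interviewer's rating of one signal (hypothetical record shape)."""
    interviewer: str
    stage: str
    signal: str
    rating: int  # rubric scale: 1 (weak) to 5 (strong)


def aggregate(scores, min_stage_avg=3.0):
    """Average ratings per stage and list stages below the cutoff.

    min_stage_avg is an assumed threshold, not a recommended value.
    """
    by_stage = {}
    for s in scores:
        if not 1 <= s.rating <= 5:
            raise ValueError(f"rating out of range: {s.rating}")
        by_stage.setdefault(s.stage, []).append(s.rating)
    averages = {stage: mean(ratings) for stage, ratings in by_stage.items()}
    weak = sorted(stage for stage, avg in averages.items() if avg < min_stage_avg)
    return averages, weak
```

Because every interviewer scores against the same signals, the debrief can start from the per-stage averages and the list of weak stages rather than from a show of hands.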

Implement the 4-stage loop starting with your next hire:

- Stage 1: Cultural fit (30 min)

- Stage 2: Technical assessment (60-90 min)

- Stage 3: Systems thinking (45 min)

- Stage 4: Decision-making under constraints (30 min)
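
For scheduling, it can help to write the loop down as data. The sketch below uses the stage names and durations listed above; the `Stage` class and `total_minutes` helper are hypothetical names introduced only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    """One interview stage with its planned duration range in minutes."""
    name: str
    min_minutes: int
    max_minutes: int


# Durations taken from the four stages described in this article.
LOOP = [
    Stage("Cultural fit and communication", 30, 30),
    Stage("Technical assessment", 60, 90),
    Stage("Systems thinking", 45, 45),
    Stage("Decision-making under constraints", 30, 30),
]


def total_minutes(stages):
    """Return the (shortest, longest) total interview time for the loop."""
    shortest = sum(s.min_minutes for s in stages)
    longest = sum(s.max_minutes for s in stages)
    return shortest, longest
```

With these durations the full loop comes to between 165 and 195 minutes of interview time per candidate, which is useful to know before promising a decision timeline.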

Track outcomes:

- Measure first-year retention rate before and after process changes

- Calculate cost per mis-hire (salary + equity + opportunity cost + team morale impact)

- Compare hiring velocity: does structured evaluation slow you down or speed you up by reducing do-overs?
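
The two headline metrics above reduce to simple arithmetic. The following is a rough sketch under stated assumptions: the function names are hypothetical, and how you put a dollar figure on opportunity cost or team morale is your own estimate, not something the formula can supply.

```python
def mis_hire_cost(salary_paid, equity_value, opportunity_cost, morale_cost=0.0):
    """Sum the cost components of a single mis-hire.

    Mirrors the formula in the list above: salary + equity +
    opportunity cost + team morale impact. All inputs are estimates
    in the same currency.
    """
    return salary_paid + equity_value + opportunity_cost + morale_cost


def first_year_retention(hired, still_employed_at_12_months):
    """Fraction of a hiring cohort still employed after twelve months."""
    if hired <= 0:
        raise ValueError("hired must be a positive count")
    if not 0 <= still_employed_at_12_months <= hired:
        raise ValueError("retained count must be between 0 and hired")
    return still_employed_at_12_months / hired
```

Compute both numbers for the cohort hired before the process change and the cohort hired after it, then compare like with like.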

Reducing one mis-hire per year saves $120K-$200K in startup costs. AI-generated resumes are already in your pipeline. Unstructured processes can't catch them. The fix isn't hiring more candidates. It's evaluating better.

Ready to build a structured hiring process that reduces mis-hire risk? Book a free consultation to see how Shoreline helps startups build evaluation frameworks that separate real skill from resume optimization.
